AI Governance: A Top Priority for Financial Firms in Singapore

Introduction to AI Risk Management in Financial Institutions

The integration of artificial intelligence (AI) into the financial sector has brought about significant changes, prompting regulatory bodies and industry leaders to emphasize the importance of robust AI risk management. Recently, the Monetary Authority of Singapore (MAS) released its guidelines on AI risk management, marking a pivotal step in ensuring that AI is deployed safely and effectively within financial institutions.

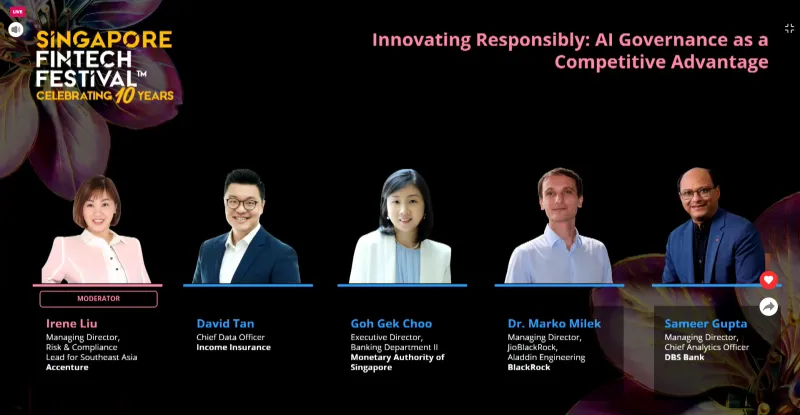

At the Singapore Fintech Festival, panellists highlighted the necessity of AI governance as a critical component of every financial institution's strategy. The discussion underscored the need for an evolving framework that adapts to the dynamic nature of AI systems. This approach ensures that institutions can harness the benefits of AI while mitigating potential risks.

Key Areas of Focus in AI Governance

Goh Gek Choo, executive director of the Banking Department II at MAS, emphasized that the guidelines were developed based on years of prior work. She noted that the industry has been seeking greater clarity and regulatory certainty as AI adoption increases. Strong governance is essential to support safe deployment, allowing institutions to progress confidently.

One balance MAS sought to strike was between clarity and flexibility. The guidelines move beyond the earlier high-level principles by providing actionable steps for implementation. They are also designed to be flexible, accommodating financial institutions of varying sizes and risk profiles. This proportionate approach allows each institution to tailor the guidelines to its specific needs.

Another critical aspect is addressing current AI-related risks while preparing for future challenges. The guidelines are anchored on existing supervisory expectations, covering oversight, risk management, policies, frameworks, lifecycle controls, and capabilities. However, they also highlight additional considerations specific to AI deployment, such as the emergence of AI agents that could introduce new vulnerabilities.

Industry Perspectives on AI Governance

Sameer Gupta, managing director and chief analytics officer at DBS Bank, welcomed the release of the guidelines. He highlighted that AI is now core to DBS’s operations, with the bank aiming to deliver nearly $1 billion in value from AI this year. Gupta explained that the bank uses A/B testing to assess the impact of AI-driven interventions, emphasizing the importance of strong governance in scaling AI use safely.
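To make the A/B testing idea concrete, here is a minimal sketch of how the impact of an AI-driven intervention might be compared against a control group. It is not DBS's method, which the panel did not describe. It assumes a simple conversion-style outcome and uses a standard two-proportion z-test; the function name and the sample figures are hypothetical.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of control (A) and AI-driven treatment (B).

    Returns (uplift, z, p_value), where uplift is rate_B - rate_A and
    p_value is two-sided under the normal approximation.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups behave the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_b - rate_a, z, p_value

# Hypothetical figures: 1,000 customers per group.
uplift, z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
print(f"uplift={uplift:.3f}, z={z:.2f}, p={p_value:.4f}")
```

A governance process would typically record the test design and result alongside the model, so that claimed value can be traced back to a controlled comparison.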

David Tan, chief data officer at Income Insurance, noted that the growing use of embedded AI within core systems has made governance more complex. He stressed the importance of grounding the organization's knowledge base to ensure that non-deterministic outcomes from AI systems are contextually relevant. Tan also highlighted the need for industry collaboration to reduce ambiguity and clarify principles, risks, and shared methodologies.

Marko Milek, managing director at BlackRock, mentioned that the handbook has reinforced and aligned the firm’s existing governance practices. BlackRock has long applied AI across its business, embedding governance as a horizontal layer across all functions. While many AI risks are already addressed through technology, vendor, and data controls, newer challenges like hallucinations in generative models require additional safeguards. Milek emphasized the importance of human-in-the-loop controls and maintaining a "walled garden" approach to protect proprietary data.

Challenges and Future Considerations

Asked what caution she would offer the industry, Goh emphasized that AI risk management must be adaptive. Even if every necessary step is taken before deployment, ongoing monitoring remains crucial because model performance can degrade as data drifts. Continuous oversight, a strong risk culture, and ongoing training are essential to ensure staff understand which tools are allowed and how to use AI safely.
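As an illustration of what monitoring for data drift can look like in practice, the sketch below computes a Population Stability Index (PSI) between a baseline sample of one input feature and a recent sample. This is one common technique, not something prescribed by the MAS guidelines as reported here. The function name, bin count, and the 0.25 alert threshold (an industry rule of thumb) are all assumptions.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one feature.

    Bins are equal-width over the baseline range; recent values outside
    that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical data: the recent sample is shifted relative to the baseline.
baseline = [i / 100 for i in range(100)]
recent = [x + 0.5 for x in baseline]
psi = population_stability_index(baseline, recent)
if psi > 0.25:  # assumed rule-of-thumb threshold for a significant shift
    print(f"PSI={psi:.2f}: significant drift, review the model")
```

Run on a schedule, a check like this gives the ongoing monitoring a concrete trigger for human review rather than relying on pre-deployment validation alone.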

The growing democratization of AI and empowerment of users within financial institutions also brings risks such as shadow IT or shadow AI, where staff use tools that have not been approved, which could lead to data compromise. Goh stressed that continuous oversight and a strong risk culture are needed to mitigate these risks effectively.

Conclusion

The release of MAS's guidelines on AI risk management marks a significant milestone in the financial sector's journey towards responsible AI adoption. By focusing on clarity, flexibility, and adaptability, these guidelines aim to support innovation while ensuring safety and compliance. As the industry continues to evolve, the importance of robust AI governance cannot be overstated. It is a critical factor in enabling financial institutions to harness the full potential of AI while safeguarding against emerging risks.