Teacher's Shock as Struggling Students Suddenly Ace Their Work

The Rise of AI in Education and the New Challenges for Teachers

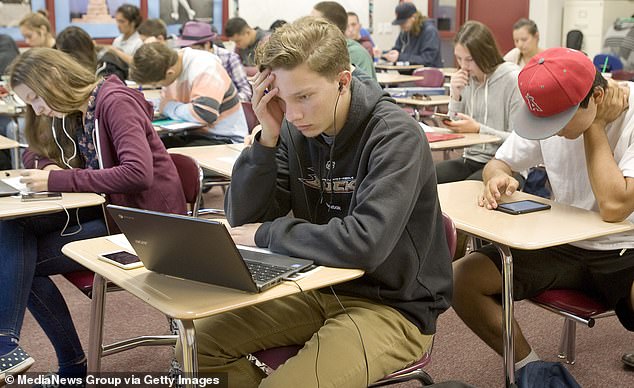

In a Los Angeles high school, English teacher Dustin Stevenson faced an unexpected dilemma during a routine grading session. His lowest-performing students had suddenly begun submitting flawless essays that earned A grades, something completely out of the ordinary. The mystery was solved when one of his students revealed the secret.

The source of this sudden academic improvement wasn't an underground website or a secret group chat. Instead, it was Google Lens, a tool embedded directly into every student's school-issued Chromebook. Students could simply hover over a test or essay question and receive instant AI-generated answers without even switching tabs or typing a single word.

Stevenson expressed his disbelief to the Mercury News: "It's hard enough to teach in the age of AI, and now we have to navigate this?" For educators like Stevenson, the discovery marked a turning point in what they describe as an escalating battle against invisible academic dishonesty. A tool once meant for identifying plants or translating signs had become an academic disruptor.

The latest version of Google Lens displays a floating AI 'bubble' on screen that scans and interprets anything within its range, making cheating increasingly difficult for teachers to detect. Traditional ways of catching cheaters, such as spotting students glancing at phones or whispering answers, are becoming less effective against a tool that operates in plain sight under the guise of legitimate technology.

AI-assisted cheating has become one of the fastest-growing concerns in classrooms across America. A survey by the Center for Democracy and Technology revealed that more than 70 percent of teachers now doubt whether their students' work is truly their own. Nearly three-quarters said they worry that an over-reliance on AI is damaging students' ability to think, write, and research independently.

The problem isn't limited to Google Lens. AI chatbots such as ChatGPT, Gemini, and Copilot have already made their way into the education system. However, Lens's integration into Google's ecosystem makes it especially difficult to police. After Stevenson reported the cheating, Lens quietly disappeared from his students' Chromebooks. He found that encouraging, but noted that the episode highlights how haphazardly AI has been introduced into schools.

William Heuisler, an ethnic studies teacher in Los Angeles, shared similar concerns. He pointed out that the shift began when schools distributed Chromebooks to millions of students during the pandemic. “After COVID-19, it was clear that Chromebooks were a terrible idea in my classroom,” Heuisler said. “Students used them to play games, watch soccer matches, and do anything but focus.” Once AI arrived, he decided to eliminate laptops from his classroom altogether.

New research supports these fears. A 2025 study from the Massachusetts Institute of Technology titled "Your Brain on ChatGPT" found that students who used AI to write essays showed far less brain activity than those who wrote unaided. Many couldn’t even recall details from their own papers. Their writing was flatter and more formulaic, with limited vocabulary and simplistic sentence structures.

Despite these concerns, about 85 percent of teachers and students now use AI in some capacity, according to the Center for Democracy and Technology survey. Teachers rely on it for grading and lesson planning, while students use it for brainstorming, research and, increasingly, shortcuts.

The rules on using AI in schoolwork remain unclear. The California Department of Education offers suggestions for ethical AI use but has no statewide policy. One state-produced video even encourages teachers not to punish students caught using AI, but instead to redesign assignments so they can't easily be answered by a machine. This lack of clarity is precisely the problem, said Alix Gallagher, a director at Policy Analysis for California Education.

“Because adults aren't clear, it's actually not surprising that kids aren't clear,” Gallagher said. “It's adults' responsibility to fix that, and if adults don't get on the same page, they will make it harder for kids who actually want to do the 'right' thing.”

A RAND survey found that only 34 percent of teachers reported consistent AI policies in their schools, while 80 percent of students said no one had explained how to use AI responsibly. Hillary Freeman, a government teacher at Piedmont High near Oakland, said her classroom rule is simple: use AI, get a zero. The only exceptions are narrow uses she specifically authorizes, such as summarizing complex topics.

“Reasoning, logic, problem-solving, writing – these are skills students need,” Freeman said. “I fear we're going to have a generation with huge cognitive gaps in critical thinking. … It's really concerning to me.” Enforcing that rule alone, she said, has added significantly to her workload.

Google insists it is acting responsibly. “Students have told us they value tools that help them learn and understand things visually, so we have been running tests offering an easier way to access Lens while browsing,” said Craig Ewer, a Google spokesperson. “We continue to work closely with educators and partners to improve the helpfulness of our tools that support the learning process.”

The company has invested more than $40 million in AI literacy for students and teachers and recently paused a 'homework help' button within Lens after feedback from users. Meanwhile, the Los Angeles Unified School District (LAUSD) has opted to keep Lens enabled on student Chromebooks. A spokesperson said the district weighs both 'the risks and benefits' and allows the feature only for students who have completed a digital literacy lesson.

As AI continues to evolve, the debate over its role in education is far from settled. Is AI a lifeline for students or a looming threat to their critical-thinking skills? And how will it shape the future of education when tech giants like Google and Microsoft are the ones setting the standards for classroom innovation? These questions are at the forefront of discussions among educators, policymakers, and parents alike.